How do you structure a robust data chain in an asset management company?

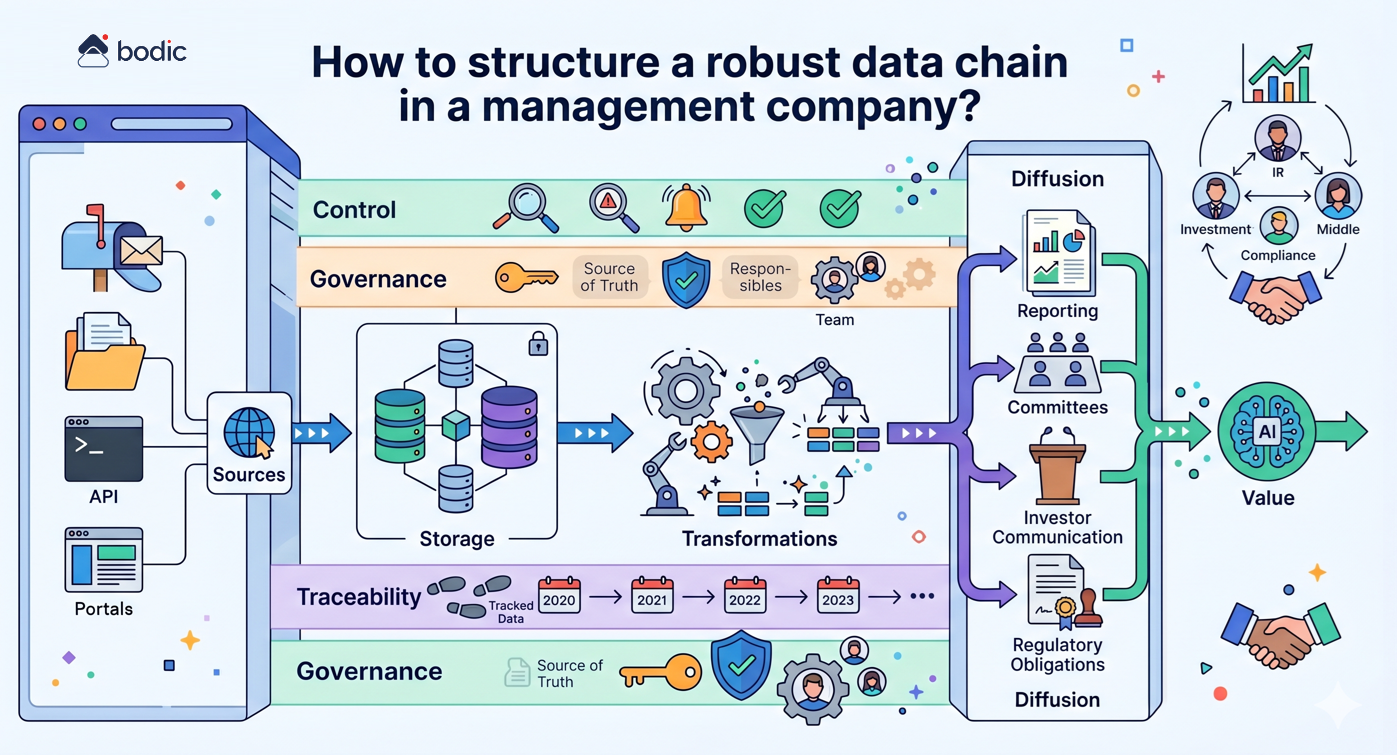

Structuring a robust data chain in an asset management company means making the flow of information explicit, controlled and reliable, from the point where it is produced to its final use.

In concrete terms, this involves formalizing several key stages: identifying data sources (emails, files, portals, APIs), defining storage locations (internal databases, data warehouses, business tools), organizing transformations (cleansing, enrichment, consolidation), and finally structuring distribution to end users (reporting, committees, investor communication, regulatory obligations).
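
To make the four stages tangible, here is a minimal sketch in Python that chains them as separate functions. Everything in it is a hypothetical illustration: the `Record` type, the fund names, the NAV figures and the function names `ingest`, `store`, `transform` and `distribute` are assumptions, and an in-memory dict stands in for a real database or warehouse.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    source: str            # hypothetical channel: "email", "portal", "api", ...
    fund: str
    nav: Optional[float]   # net asset value; None when the source omitted it


def ingest() -> list[Record]:
    """Stage 1: collect raw records from identified sources (hard-coded here)."""
    return [
        Record("portal", "Fund A", 120.0),
        Record("email", "Fund B", None),
        Record("api", "Fund C", 87.5),
    ]


def store(records: list[Record]) -> dict[str, Record]:
    """Stage 2: land records in one storage location (a dict stands in for a warehouse table)."""
    return {r.fund: r for r in records}


def transform(table: dict[str, Record]) -> dict[str, float]:
    """Stage 3: cleanse by dropping records whose NAV is missing."""
    return {fund: r.nav for fund, r in table.items() if r.nav is not None}


def distribute(clean: dict[str, float]) -> str:
    """Stage 4: render a one-line summary for an end use such as a report."""
    return "; ".join(f"{fund}: {nav:.1f}" for fund, nav in sorted(clean.items()))


report = distribute(transform(store(ingest())))
print(report)  # Fund A: 120.0; Fund C: 87.5
```

Keeping each stage as its own function lets each step be tested and monitored separately. Note that Fund B is silently dropped here; in a real chain that gap would itself warrant an alert.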

A robust data chain is based on a few fundamental principles.

First and foremost, each piece of critical data must be clearly defined: an identified source, a reference format, an update frequency and a designated owner. Without this discipline, discrepancies quickly appear between teams, tools and deliverables.
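
One way to enforce this discipline is a simple data dictionary in which every critical item must carry all four attributes. The sketch below assumes a hypothetical `DataDefinition` record and a `missing_attributes` helper; the catalog entries and owner roles are illustrative, not taken from any real setup.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DataDefinition:
    name: str              # business name of the data item
    source: str            # identified source
    reference_format: str  # expected format, e.g. "decimal, EUR"
    update_frequency: str  # e.g. "monthly", "quarterly"
    owner: str             # person or role in charge


def missing_attributes(d: DataDefinition) -> list[str]:
    """Return the names of attributes left empty for a data item."""
    return [f.name for f in fields(d) if not getattr(d, f.name).strip()]


# Hypothetical catalog entries.
catalog = [
    DataDefinition("Fund NAV", "Administrator portal", "decimal, EUR", "quarterly", "Head of middle office"),
    DataDefinition("Portfolio company revenue", "Quarterly reporting email", "decimal, local currency", "quarterly", ""),
]

for item in catalog:
    gaps = missing_attributes(item)
    if gaps:
        print(f"{item.name}: missing {', '.join(gaps)}")
# Portfolio company revenue: missing owner
```

Running such a check whenever the catalog changes turns the "defined source, format, frequency and owner" rule into something that can be verified rather than merely stated.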

Next, it's essential to limit redundancy. A proliferation of Excel files, local extracts and parallel versions creates inconsistencies and undermines confidence in the figures. The aim is to converge on a single shared, accessible and controlled "source of truth".
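
While parallel copies still exist, one practical step toward a single source of truth is to reconcile them against the reference regularly. The following sketch assumes a hypothetical `divergences` helper, illustrative file names and made-up figures; it simply flags any copied value that differs from, or is absent from, the reference.

```python
from __future__ import annotations


def divergences(
    reference: dict[tuple[str, str], float],
    copies: dict[str, dict[tuple[str, str], float]],
    tolerance: float = 1e-9,
) -> list[tuple]:
    """Return (copy, key, copy_value, reference_value) for every figure that
    disagrees with the reference or does not exist in it."""
    issues = []
    for copy_name, values in copies.items():
        for key, value in values.items():
            ref = reference.get(key)
            if ref is None or abs(value - ref) > tolerance:
                issues.append((copy_name, key, value, ref))
    return issues


# Reference values ("source of truth"), keyed by (fund, metric).
reference = {("Fund A", "NAV"): 120.0, ("Fund A", "IRR"): 0.142}

# Hypothetical parallel copies, e.g. figures re-keyed into local spreadsheets.
copies = {
    "ir_deck.xlsx": {("Fund A", "NAV"): 120.0, ("Fund A", "IRR"): 0.139},
    "committee_pack.xlsx": {("Fund A", "NAV"): 120.0},
}

for issue in divergences(reference, copies):
    print(issue)
# ('ir_deck.xlsx', ('Fund A', 'IRR'), 0.139, 0.142)
```

Reconciliation treats the symptom rather than the cause; the longer-term goal remains for every deliverable to read directly from the shared reference.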

Traceability is also central. Every figure used in a report or committee pack must be traceable back to its origin, together with the history of transformations applied to it. This becomes critical as requirements from LPs (limited partners) and regulators increase.
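
A lightweight way to illustrate this is to carry the lineage alongside the value itself. In the sketch below, the `TracedValue` class, the source description and the 0.92 conversion rate are hypothetical; each transformation returns a new value whose history records the step that produced it.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class TracedValue:
    value: float
    origin: str                     # where the raw figure came from
    history: tuple[str, ...] = ()   # ordered record of applied transformations

    def apply(self, step: str, fn: Callable[[float], float]) -> TracedValue:
        """Return a new value with the transformation appended to its history."""
        return TracedValue(fn(self.value), self.origin, self.history + (step,))


# Hypothetical raw figure and conversion rate, for illustration only.
raw = TracedValue(1_250_000.0, "Q3 report from portfolio company X (PDF)")
in_eur = raw.apply("convert USD->EUR at 0.92", lambda v: v * 0.92)
in_thousands = in_eur.apply("scale to thousands", lambda v: v / 1000)

print(in_thousands.origin)
for step in in_thousands.history:
    print(" -", step)
```

Because the class is immutable, the raw figure and every intermediate result stay available, so any number in a final report can be walked back to its source.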

Finally, a robust chain includes control mechanisms: validation rules, alerts when anomalies occur, and human review at sensitive points. This framework ensures quality without slowing down operations.
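
The three mechanisms can be combined in a small rules engine: each rule either raises a non-blocking alert or routes the record to a human review queue. The `Rule` class, the `run_controls` function, the 20% threshold and the sample rows below are all hypothetical choices made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    check: Callable[[dict], bool]  # returns True when the row passes
    blocking: bool                 # True: send to human review; False: alert only


rules = [
    Rule("NAV is positive", lambda r: r["nav"] > 0, blocking=True),
    # Hypothetical 20% threshold on the move versus the prior period.
    Rule(
        "NAV move under 20% vs prior",
        lambda r: r["prior_nav"] > 0 and abs(r["nav"] / r["prior_nav"] - 1) < 0.20,
        blocking=False,
    ),
]


def run_controls(rows: list[dict], rules: list[Rule]) -> tuple[list[str], list[str]]:
    """Apply every rule to every row; split failures into alerts and a review queue."""
    alerts: list[str] = []
    review_queue: list[str] = []
    for row in rows:
        for rule in rules:
            if not rule.check(row):
                message = f"{row['fund']}: failed '{rule.name}'"
                if rule.blocking:
                    review_queue.append(message)
                else:
                    alerts.append(message)
    return alerts, review_queue


rows = [
    {"fund": "Fund A", "nav": 120.0, "prior_nav": 115.0},
    {"fund": "Fund B", "nav": 150.0, "prior_nav": 100.0},
    {"fund": "Fund C", "nav": -5.0, "prior_nav": 90.0},
]
alerts, review_queue = run_controls(rows, rules)
print(review_queue)  # ["Fund C: failed 'NAV is positive'"]
```

Separating blocking from non-blocking rules is what keeps controls from slowing operations: only clear errors wait for a person, while unusual but plausible moves are flagged and processing continues.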

The stakes go far beyond the technical. A well-structured data chain improves the quality of reporting, facilitates collaboration between teams (investment, IR, middle office, compliance), strengthens credibility with investors and accelerates decision-making.

It's also a prerequisite for the effective deployment of AI tools. Without structured, reliable and governed data, AI amplifies existing shortcomings instead of creating value.