What new roles are emerging in a fund with AI and Data?

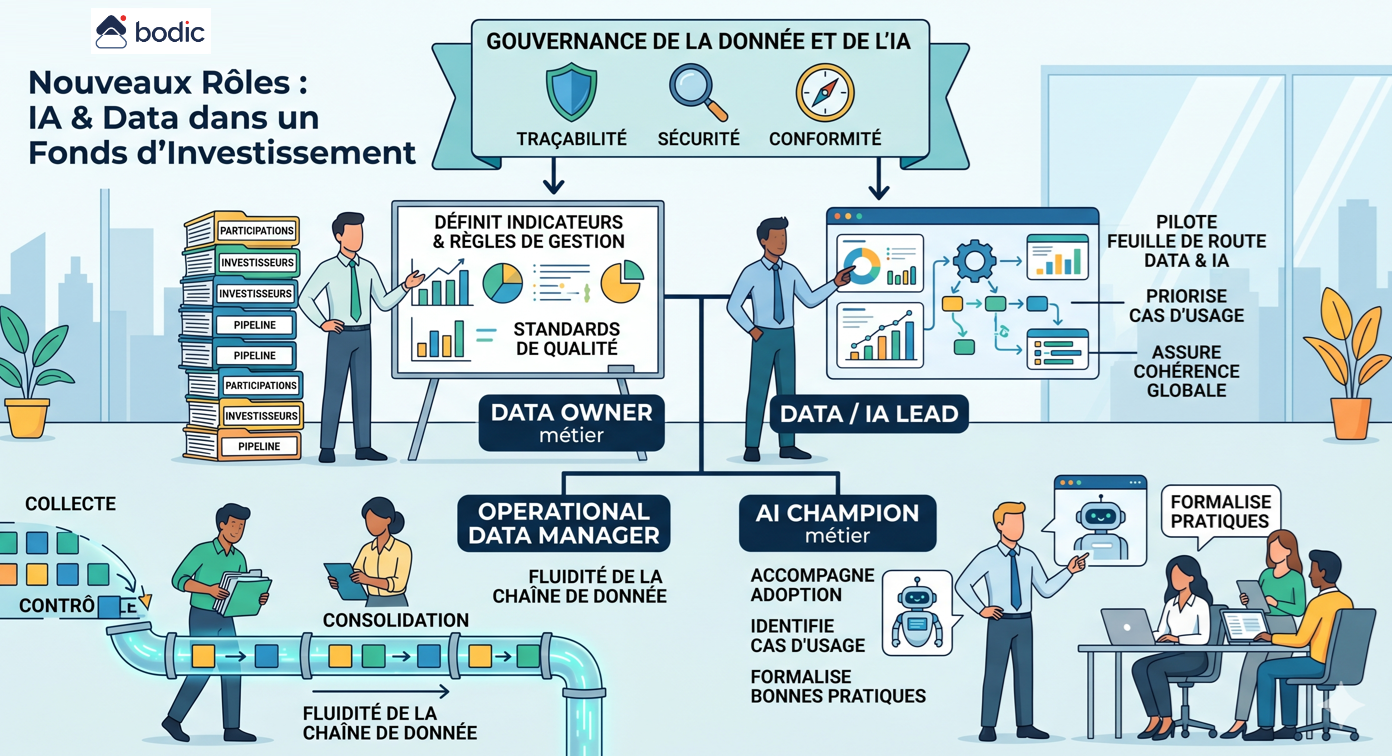

Introducing AI and data into a fund does not abruptly disrupt existing functions, but it does give rise to new roles around data structuring, governance and operational use.

The first key role is the business Data Owner. He or she is responsible for a critical data scope, such as portfolio holdings, investors or the deal pipeline, and defines the indicators, management rules, expected formats and quality standards for that scope. Without this role, data remains scattered and difficult to exploit.
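As an illustration, the formats and quality standards a Data Owner defines can be written down as declarative rules that are checked automatically. The sketch below is only one possible shape, under stated assumptions: the field names (`investor_id`, `commitment_eur`, `commitment_date`, `contact_email`), the `FieldRule` class and the `validate` helper are all hypothetical and do not come from any particular tool.

```python
from dataclasses import dataclass
from datetime import date
from typing import Any, Callable, Dict, List, Optional


@dataclass(frozen=True)
class FieldRule:
    """One rule set by the Data Owner for a single field (hypothetical schema)."""
    name: str
    expected_type: type
    required: bool = True
    check: Optional[Callable[[Any], bool]] = None  # optional quality check


# Hypothetical rules for an "investors" data scope.
INVESTOR_RULES: List[FieldRule] = [
    FieldRule("investor_id", str),
    FieldRule("commitment_eur", float, check=lambda v: v >= 0),
    FieldRule("commitment_date", date),
    FieldRule("contact_email", str, required=False, check=lambda v: "@" in v),
]


def validate(record: Dict[str, Any], rules: List[FieldRule]) -> List[str]:
    """Return a list of human-readable issues; an empty list means the record passes."""
    issues: List[str] = []
    for rule in rules:
        value = record.get(rule.name)
        if value is None:
            if rule.required:
                issues.append(f"{rule.name}: missing")
            continue
        if not isinstance(value, rule.expected_type):
            issues.append(f"{rule.name}: expected {rule.expected_type.__name__}")
            continue
        if rule.check is not None and not rule.check(value):
            issues.append(f"{rule.name}: failed quality check")
    return issues


if __name__ == "__main__":
    record = {"investor_id": "LP-002", "commitment_eur": -1.0}
    for issue in validate(record, INVESTOR_RULES):
        print(issue)
```

Keeping the rules as data rather than burying them in code means the Data Owner can review and amend them without needing to read the validation logic.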

The second role is the Data / AI Lead. He or she steers the fund's data and AI roadmap, prioritizes use cases, decides between competing tools and ensures overall consistency. He or she acts as the point of convergence between the investment teams, Middle Office, IR and support functions.

A third role is emerging: the Operational Data Manager. Positioned at the heart of operations, often in the Middle Office, he or she ensures that data flows are correctly collected, checked, consolidated and distributed, and is responsible for the operational quality and smooth running of the data chain.

With AI, a more specific role is also emerging: the Business AI Champion. This profile is not necessarily technical, but has a thorough command of the tools and their uses. He or she supports teams in adopting them, identifies relevant use cases and formalizes best practices, particularly regarding the supervision and limits of AI agents.

Finally, a cross-functional Data and AI Governance role is becoming essential. It covers traceability, security, compliance and control. Given LP and regulatory expectations, the ability to explain a piece of data or a decision becomes as important as producing it.
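To make the traceability point concrete, one possible approach is to carry a figure's source and processing history along with its value, so that it can be explained on request. This is a minimal sketch under stated assumptions: the `TracedValue` and `LineageStep` classes, the actor names and the sample figure are all hypothetical and purely illustrative.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class LineageStep:
    """One recorded action on a data point (hypothetical structure)."""
    actor: str
    action: str
    timestamp: datetime


@dataclass
class TracedValue:
    """A value that keeps its source and the history of actions applied to it."""
    name: str
    value: float
    source: str
    history: List[LineageStep] = field(default_factory=list)

    def record(self, actor: str, action: str) -> None:
        """Append a timestamped step to the history."""
        self.history.append(LineageStep(actor, action, datetime.now(timezone.utc)))

    def explain(self) -> str:
        """Summarize where the value came from and who acted on it."""
        steps = "; ".join(f"{s.action} by {s.actor}" for s in self.history)
        return f"{self.name} = {self.value} (source: {self.source}; steps: {steps or 'none'})"


if __name__ == "__main__":
    # Illustrative figure only, not real data.
    nav = TracedValue("nav_eur", 12_500_000.0, "administrator report Q4")
    nav.record("middle_office", "reconciled")
    nav.record("data_owner", "approved")
    print(nav.explain())
```

In practice this history would usually live in a database or audit log rather than in memory; the sketch only shows the kind of information worth capturing.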

A dedicated team is not needed right away. In most funds, these roles emerge gradually from existing teams. The challenge is to identify responsibilities, clarify scopes and plan targeted skills development.

The key point is to keep these roles rooted in the business. They must not be confined to a purely technical silo, but integrated into the heart of the investment and management processes. It is this proximity that turns data into a real performance lever.
